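Resource Configuration

Concepts

- ContainerWorkers: a group of map/reduce containers. Compared with a Tez job, a ContainerWorker is a combination of many map or reduce containers.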

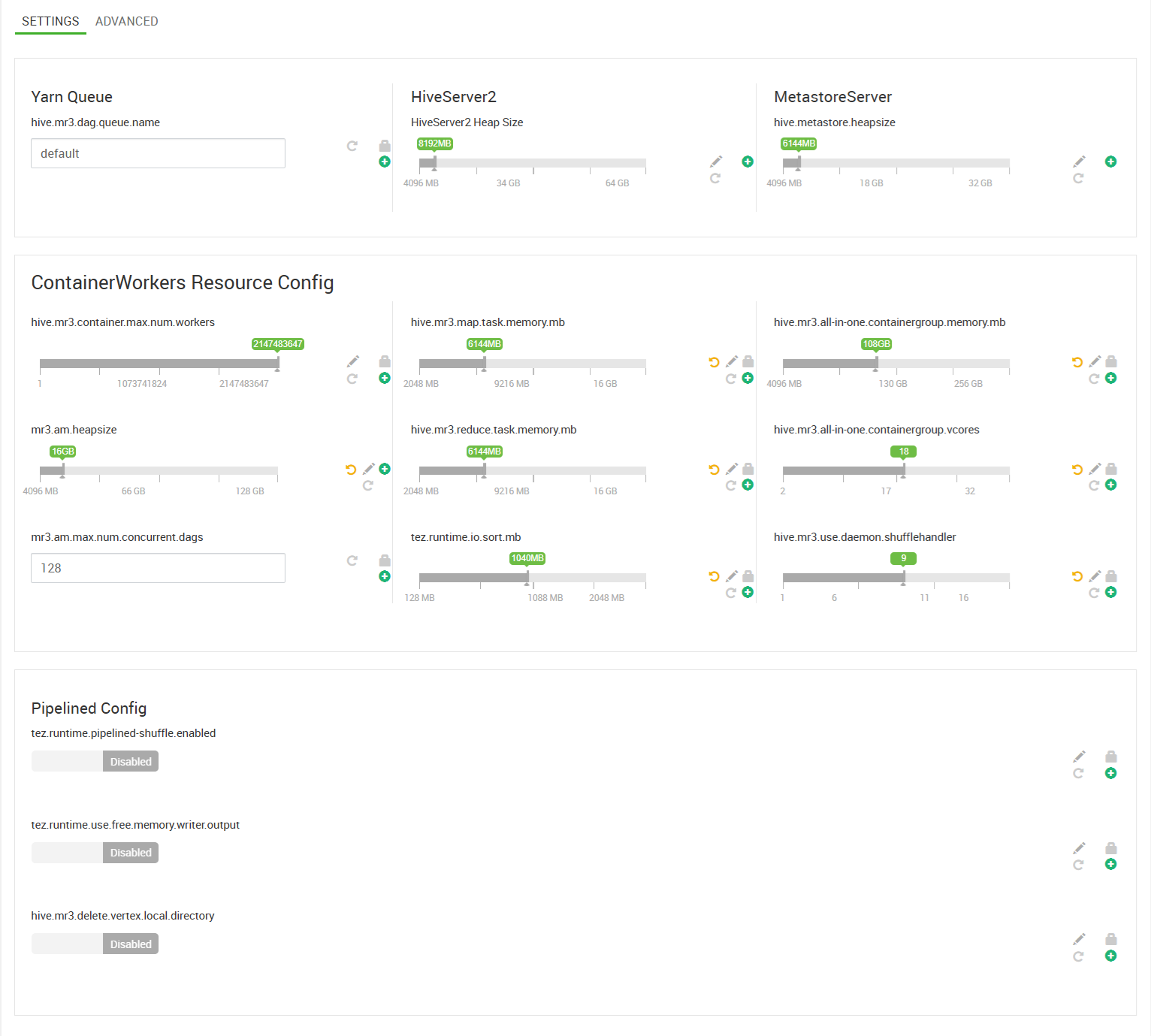

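Key Parameter Configuration

Resource-related parameters are grouped on the Settings tab in Ambari.

Adjust to the actual cluster conditions

These parameters need to be adjusted according to the YARN resource configuration and the cluster's queue resource plan.

Parameter list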

| Configuration file | Parameter | Default (HDP) | Tuning advice | Notes |
|---|---|---|---|---|
| hiveplus-hive-site | hive.mr3.container.max.num.workers | 2147483647 | Maximum number of workers (ContainerGroups); used to cap resource usage. If it really needs adjusting, set it to a multiple of the number of NodeManager nodes. | |
| hiveplus-hive-site | mr3.am.heapsize | 16384 | Memory for the YARN ApplicationMaster (Driver). The more concurrent tasks, the more memory it needs. | |
| hiveplus-hive-site | mr3.am.max.num.concurrent.dags | 16384 | The more concurrent SQL queries, the larger this value should be. Usually no adjustment is needed. | |
| hiveplus-hive-site | hive.mr3.map.task.memory.mb | 6144 | Memory allocated to each mapper (MB). | |
| hiveplus-hive-site | hive.mr3.reduce.task.memory.mb | 6144 | Memory allocated to each reducer (MB). | |
| hiveplus-tez-site | tez.runtime.io.sort.mb | 1040 | Should not exceed 1/2 of hive.mr3.reduce.task.memory.mb; a value that is too large causes errors. | |
| hiveplus-hive-site | hive.mr3.all-in-one.containergroup.memory.mb | 110592 | Memory allocated to each ContainerGroup (MB). Must not exceed the NodeManager memory. | |
| hiveplus-hive-site | hive.mr3.all-in-one.containergroup.vcores | 18 | Number of cores allocated to each ContainerGroup. Must not exceed the number of CPUs on the NodeManager. | |
| hiveplus-hive-site | hive.mr3.use.daemon.shufflehandler | 9 | Half of hive.mr3.all-in-one.containergroup.vcores, usually rounded up. | |

Parameter relationships

| Parameter | Relation | Parameter | Notes |
|---|---|---|---|
| hive.mr3.map.task.memory.mb * hive.mr3.all-in-one.containergroup.vcores | ≤ | hive.mr3.all-in-one.containergroup.memory.mb | |
| hive.mr3.reduce.task.memory.mb * hive.mr3.all-in-one.containergroup.vcores | ≤ | hive.mr3.all-in-one.containergroup.memory.mb | |
| hive.mr3.use.daemon.shufflehandler | = | hive.mr3.all-in-one.containergroup.vcores/2 | Round to an integer; usually round up, but rounding down is also acceptable. |

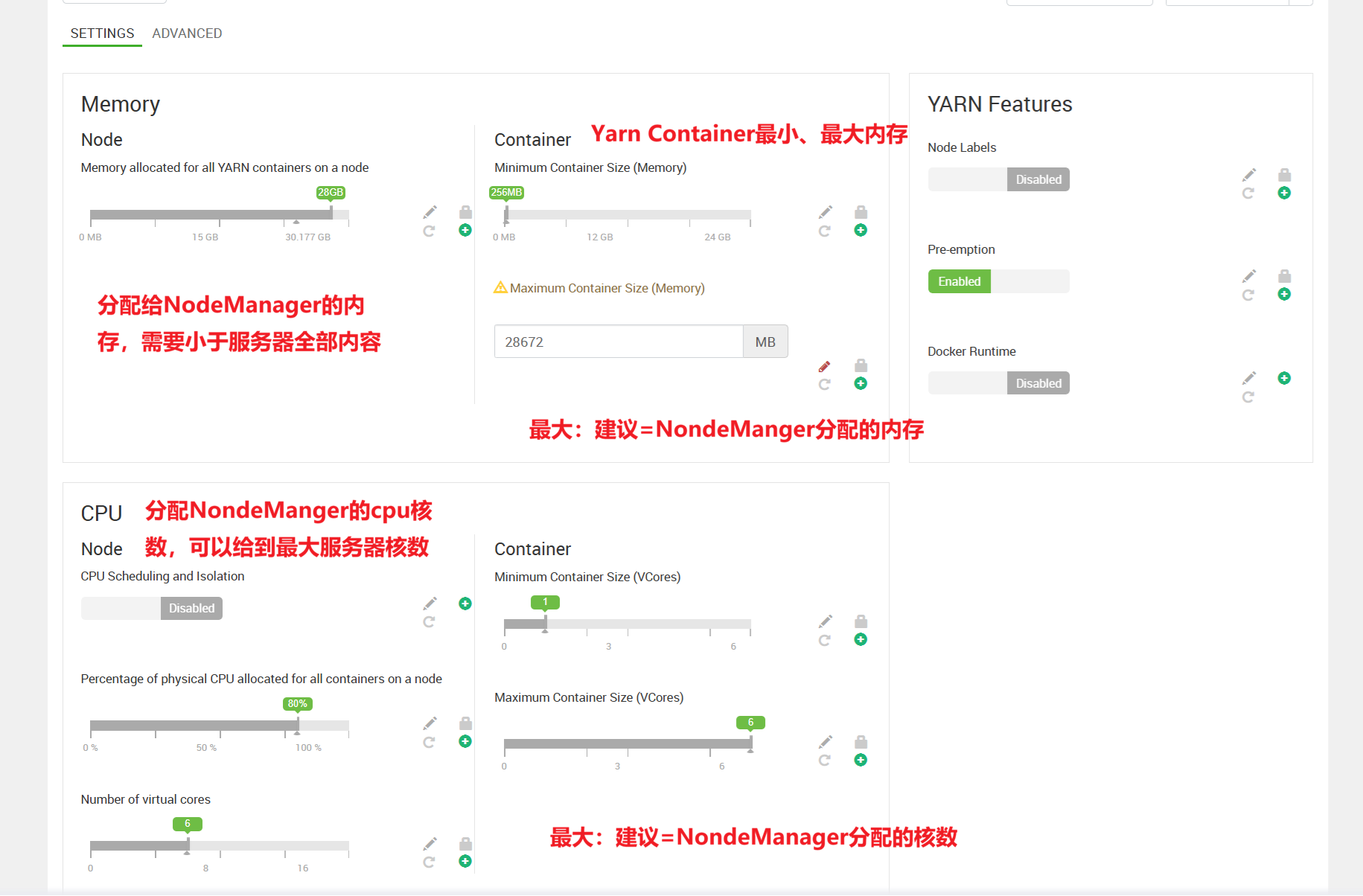
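The relationships above can be checked mechanically before a configuration is saved. The following is a minimal sketch, not part of the product: the function name `check_mr3_settings` and the plain-dictionary input are assumptions made for illustration, and the `tez.runtime.io.sort.mb` rule is taken from the tuning advice in the parameter list.

```python
import math


def check_mr3_settings(cfg):
    """Return a list of violated relationships (empty if all hold).

    `cfg` is a hypothetical dict mapping parameter names to integer values.
    """
    problems = []
    group_mem = cfg["hive.mr3.all-in-one.containergroup.memory.mb"]
    group_vcores = cfg["hive.mr3.all-in-one.containergroup.vcores"]

    # Task memory * vcores must fit into the ContainerGroup memory.
    for key in ("hive.mr3.map.task.memory.mb", "hive.mr3.reduce.task.memory.mb"):
        needed = cfg[key] * group_vcores
        if needed > group_mem:
            problems.append(f"{key} * vcores = {needed} MB > {group_mem} MB")

    # Sort buffer should be at most half of the reducer memory.
    sort_mb = cfg["tez.runtime.io.sort.mb"]
    if sort_mb > cfg["hive.mr3.reduce.task.memory.mb"] / 2:
        problems.append(f"tez.runtime.io.sort.mb = {sort_mb} exceeds half of reducer memory")

    # Shuffle handlers: half of the vcores, rounded up (or down).
    allowed = {math.ceil(group_vcores / 2), group_vcores // 2}
    if cfg["hive.mr3.use.daemon.shufflehandler"] not in allowed:
        problems.append(f"hive.mr3.use.daemon.shufflehandler should be one of {sorted(allowed)}")

    return problems


# Default values from the parameter list.
defaults = {
    "hive.mr3.map.task.memory.mb": 6144,
    "hive.mr3.reduce.task.memory.mb": 6144,
    "tez.runtime.io.sort.mb": 1040,
    "hive.mr3.all-in-one.containergroup.memory.mb": 110592,
    "hive.mr3.all-in-one.containergroup.vcores": 18,
    "hive.mr3.use.daemon.shufflehandler": 9,
}
print(check_mr3_settings(defaults))
```

With the defaults, 6144 MB × 18 vcores is exactly 110592 MB, so the memory relationships hold with no headroom; raising either task memory without raising the ContainerGroup memory would violate them.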

Relationship to YARN parameters

| Parameter | Relation | YARN parameter | Notes |
|---|---|---|---|
| hive.mr3.all-in-one.containergroup.memory.mb | ≤ | yarn.scheduler.maximum-allocation-mb | |
| hive.mr3.all-in-one.containergroup.vcores | ≤ | yarn.scheduler.maximum-allocation-vcores | |
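As a rough illustration of the YARN-side check, the sketch below compares a ContainerGroup request with the scheduler's per-container maximums. It assumes the relevant limits are `yarn.scheduler.maximum-allocation-mb` and `yarn.scheduler.maximum-allocation-vcores`; the function name and the YARN values used in the example (65536 MB, 16 vcores) are hypothetical and should be replaced with the values from the actual cluster.

```python
def yarn_limit_violations(group_memory_mb, group_vcores, yarn_max_mb, yarn_max_vcores):
    """Return the ways a ContainerGroup request exceeds the YARN per-container maximums."""
    violations = []
    if group_memory_mb > yarn_max_mb:
        violations.append(
            f"memory {group_memory_mb} MB > yarn.scheduler.maximum-allocation-mb ({yarn_max_mb})"
        )
    if group_vcores > yarn_max_vcores:
        violations.append(
            f"vcores {group_vcores} > yarn.scheduler.maximum-allocation-vcores ({yarn_max_vcores})"
        )
    return violations


# ContainerGroup defaults from the parameter list against hypothetical YARN limits.
for line in yarn_limit_violations(110592, 18, yarn_max_mb=65536, yarn_max_vcores=16):
    print(line)
```

An empty result means the ContainerGroup request fits within a single YARN container under the assumed limits.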

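Errors caused by improper parameter configuration

If the memory settings do not satisfy these conditions, running a Tez task produces the following error: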

0: jdbc:hive2://datanode01:9842> select distinct id from t1;

INFO : Compiling command(queryId=hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626): select distinct id from t1

INFO : Semantic Analysis Completed (retrial = false)

INFO : Returning Hive schema: Schema(fieldSchemas:[FieldSchema(name:id, type:int, comment:null)], properties:null)

INFO : Completed compiling command(queryId=hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626); Time taken: 1.651 seconds

INFO : Executing command(queryId=hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626): select distinct id from t1

INFO : Query ID = hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626

INFO : Total jobs = 1

INFO : Launching Job 1 out of 1

INFO : Starting task [Stage-1:MAPRED] in serial mode

INFO : MR3Task.execute(): hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626:1

INFO : Starting MR3 Session...

INFO : Finished building DAG, now submitting: hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626:1

ERROR : FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.tez.TezTask. com.datamonad.mr3.api.common.MR3Exception: DAGClientRPC failure

at com.datamonad.mr3.client.DAGClientRPC.rpc(DAGClientRPC.scala:310)

at com.datamonad.mr3.client.DAGClientRPC.submitDag(DAGClientRPC.scala:194)

at com.datamonad.mr3.client.MR3SessionClientImpl.submitDag(MR3SessionClientImpl.scala:118)

at org.apache.hadoop.hive.ql.exec.mr3.HiveMR3ClientImpl.submitDag(HiveMR3ClientImpl.java:115)

at org.apache.hadoop.hive.ql.exec.mr3.session.MR3SessionImpl.submit(MR3SessionImpl.java:369)

at org.apache.hadoop.hive.ql.exec.mr3.MR3Task.execute(MR3Task.java:167)

at org.apache.hadoop.hive.ql.exec.tez.TezTask.executeMr3(TezTask.java:148)

at org.apache.hadoop.hive.ql.exec.tez.TezTask.execute(TezTask.java:136)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:212)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:101)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2672)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:2351)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:2029)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1729)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1723)

at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:157)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:231)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork$1.run(SQLOperation.java:347)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork.run(SQLOperation.java:365)

at java.base/java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:539)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1136)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:635)

at java.base/java.lang.Thread.run(Thread.java:840)

Caused by: org.apache.hadoop.ipc.RemoteException(com.datamonad.mr3.api.common.MR3Exception): DAGProto failure hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626:1

at com.datamonad.mr3.master.DAGAppMaster.startDag(DAGAppMaster.scala:2043)

at com.datamonad.mr3.master.DAGAppMaster.submitDag(DAGAppMaster.scala:1844)

at com.datamonad.mr3.RunningAppContext.submitDag(AppContext.scala:303)

at com.datamonad.mr3.master.DAGClientHandler.submitDag(DAGClientHandler.scala:279)

at com.datamonad.mr3.master.DAGClientHandlerProtocolServer.submitDag(DAGClientHandlerProtocolServer.scala:185)

at com.datamonad.mr3.client.DAGClientHandlerProtocolRPC$DAGClientHandlerProtocol$2.callBlockingMethod(DAGClientHandlerProtocolRPC.java:26654)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server.processCall(ProtobufRpcEngine.java:484)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:595)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:573)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1227)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1094)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1017)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:3048)

Caused by: java.lang.IllegalArgumentException: requirement failed: Container resource too large: ContainerGroup All-In-One, [110592MB, 18]

at scala.Predef$.require(Predef.scala:281)

at com.datamonad.mr3.dag.ContainerGroup.<init>(ContainerGroup.scala:417)

at com.datamonad.mr3.dag.ContainerGroup$.createContainerGroup(ContainerGroup.scala:226)

at com.datamonad.mr3.dag.ContainerGroup$.getOrCreateContainerGroup(ContainerGroup.scala:108)

at com.datamonad.mr3.dag.DAGImpl.$anonfun$getLocalContainerGroups$5(DAG.scala:842)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

at scala.collection.Iterator.foreach(Iterator.scala:943)

at scala.collection.Iterator.foreach$(Iterator.scala:943)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at scala.collection.TraversableLike.map(TraversableLike.scala:286)

at scala.collection.TraversableLike.map$(TraversableLike.scala:279)

at scala.collection.AbstractTraversable.map(Traversable.scala:108)

at com.datamonad.mr3.dag.DAGImpl.getLocalContainerGroups(DAG.scala:838)

at com.datamonad.mr3.dag.DAGImpl.createVertexEdge(DAG.scala:732)

at com.datamonad.mr3.dag.DAGImpl.initDag(DAG.scala:509)

at com.datamonad.mr3.dag.DAGImpl.initialize(DAG.scala:473)

at com.datamonad.mr3.master.DAGAppMaster$$anon$27.run(DAGAppMaster.scala:2104)

at com.datamonad.mr3.master.DAGAppMaster$$anon$27.run(DAGAppMaster.scala:2102)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at com.datamonad.mr3.master.DAGAppMaster.$anonfun$initializeDag$1(DAGAppMaster.scala:2102)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at com.datamonad.mr3.common.Utils$MR3ReadWriteLock.write(Utils.scala:119)

at com.datamonad.mr3.master.DAGAppMaster.initializeDag(DAGAppMaster.scala:2102)

at com.datamonad.mr3.master.DAGAppMaster.startDag(DAGAppMaster.scala:2020)

... 15 more

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1567)

at org.apache.hadoop.ipc.Client.call(Client.java:1513)

at org.apache.hadoop.ipc.Client.call(Client.java:1410)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:250)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:132)

at jdk.proxy2/jdk.proxy2.$Proxy53.submitDag(Unknown Source)

at com.datamonad.mr3.client.DAGClientRPC.$anonfun$submitDag$1(DAGClientRPC.scala:196)

at com.datamonad.mr3.client.DAGClientRPC.rpc(DAGClientRPC.scala:298)

... 26 more

INFO : Completed executing command(queryId=hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626); Time taken: 0.316 seconds

Error: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.tez.TezTask. com.datamonad.mr3.api.common.MR3Exception: DAGClientRPC failure

at com.datamonad.mr3.client.DAGClientRPC.rpc(DAGClientRPC.scala:310)

at com.datamonad.mr3.client.DAGClientRPC.submitDag(DAGClientRPC.scala:194)

at com.datamonad.mr3.client.MR3SessionClientImpl.submitDag(MR3SessionClientImpl.scala:118)

at org.apache.hadoop.hive.ql.exec.mr3.HiveMR3ClientImpl.submitDag(HiveMR3ClientImpl.java:115)

at org.apache.hadoop.hive.ql.exec.mr3.session.MR3SessionImpl.submit(MR3SessionImpl.java:369)

at org.apache.hadoop.hive.ql.exec.mr3.MR3Task.execute(MR3Task.java:167)

at org.apache.hadoop.hive.ql.exec.tez.TezTask.executeMr3(TezTask.java:148)

at org.apache.hadoop.hive.ql.exec.tez.TezTask.execute(TezTask.java:136)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:212)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:101)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2672)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:2351)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:2029)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1729)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1723)

at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:157)

at org.apache.hive.service.cli.operation.SQLOperation.runQuery(SQLOperation.java:231)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork$1.run(SQLOperation.java:347)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at org.apache.hive.service.cli.operation.SQLOperation$BackgroundWork.run(SQLOperation.java:365)

at java.base/java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:539)

at java.base/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1136)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:635)

at java.base/java.lang.Thread.run(Thread.java:840)

Caused by: org.apache.hadoop.ipc.RemoteException(com.datamonad.mr3.api.common.MR3Exception): DAGProto failure hive_20260427030752_cbe0a0bc-032f-4a31-be33-6e6c139f0626:1

at com.datamonad.mr3.master.DAGAppMaster.startDag(DAGAppMaster.scala:2043)

at com.datamonad.mr3.master.DAGAppMaster.submitDag(DAGAppMaster.scala:1844)

at com.datamonad.mr3.RunningAppContext.submitDag(AppContext.scala:303)

at com.datamonad.mr3.master.DAGClientHandler.submitDag(DAGClientHandler.scala:279)

at com.datamonad.mr3.master.DAGClientHandlerProtocolServer.submitDag(DAGClientHandlerProtocolServer.scala:185)

at com.datamonad.mr3.client.DAGClientHandlerProtocolRPC$DAGClientHandlerProtocol$2.callBlockingMethod(DAGClientHandlerProtocolRPC.java:26654)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server.processCall(ProtobufRpcEngine.java:484)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:595)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:573)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1227)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1094)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1017)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:3048)

Caused by: java.lang.IllegalArgumentException: requirement failed: Container resource too large: ContainerGroup All-In-One, [110592MB, 18]

at scala.Predef$.require(Predef.scala:281)

at com.datamonad.mr3.dag.ContainerGroup.<init>(ContainerGroup.scala:417)

at com.datamonad.mr3.dag.ContainerGroup$.createContainerGroup(ContainerGroup.scala:226)

at com.datamonad.mr3.dag.ContainerGroup$.getOrCreateContainerGroup(ContainerGroup.scala:108)

at com.datamonad.mr3.dag.DAGImpl.$anonfun$getLocalContainerGroups$5(DAG.scala:842)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

at scala.collection.Iterator.foreach(Iterator.scala:943)

at scala.collection.Iterator.foreach$(Iterator.scala:943)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at scala.collection.TraversableLike.map(TraversableLike.scala:286)

at scala.collection.TraversableLike.map$(TraversableLike.scala:279)

at scala.collection.AbstractTraversable.map(Traversable.scala:108)

at com.datamonad.mr3.dag.DAGImpl.getLocalContainerGroups(DAG.scala:838)

at com.datamonad.mr3.dag.DAGImpl.createVertexEdge(DAG.scala:732)

at com.datamonad.mr3.dag.DAGImpl.initDag(DAG.scala:509)

at com.datamonad.mr3.dag.DAGImpl.initialize(DAG.scala:473)

at com.datamonad.mr3.master.DAGAppMaster$$anon$27.run(DAGAppMaster.scala:2104)

at com.datamonad.mr3.master.DAGAppMaster$$anon$27.run(DAGAppMaster.scala:2102)

at java.base/java.security.AccessController.doPrivileged(AccessController.java:712)

at java.base/javax.security.auth.Subject.doAs(Subject.java:439)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)

at com.datamonad.mr3.master.DAGAppMaster.$anonfun$initializeDag$1(DAGAppMaster.scala:2102)

at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)

at com.datamonad.mr3.common.Utils$MR3ReadWriteLock.write(Utils.scala:119)

at com.datamonad.mr3.master.DAGAppMaster.initializeDag(DAGAppMaster.scala:2102)

at com.datamonad.mr3.master.DAGAppMaster.startDag(DAGAppMaster.scala:2020)

... 15 more

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1567)

at org.apache.hadoop.ipc.Client.call(Client.java:1513)

at org.apache.hadoop.ipc.Client.call(Client.java:1410)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:250)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:132)

at jdk.proxy2/jdk.proxy2.$Proxy53.submitDag(Unknown Source)

at com.datamonad.mr3.client.DAGClientRPC.$anonfun$submitDag$1(DAGClientRPC.scala:196)

at com.datamonad.mr3.client.DAGClientRPC.rpc(DAGClientRPC.scala:298)

... 26 more (state=08S01,code=1)
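The root cause in the trace above is the innermost `Caused by` line, `Container resource too large: ContainerGroup All-In-One, [110592MB, 18]`, which reports the ContainerGroup size that was rejected. A small sketch for pulling that line out of a long log is shown below; the function name is hypothetical, and the regular expression assumes the message format is exactly as it appears in this trace.

```python
import re

# Pattern based on the message format seen in the trace above (an assumption;
# other MR3 versions may word the message differently).
ROOT_CAUSE = re.compile(
    r"Container resource too large: ContainerGroup (?P<name>.+?), "
    r"\[(?P<memory_mb>\d+)MB, (?P<vcores>\d+)\]"
)


def find_oversized_container_group(log_text):
    """Return (name, memory_mb, vcores) of the rejected ContainerGroup, or None."""
    match = ROOT_CAUSE.search(log_text)
    if match is None:
        return None
    return match["name"], int(match["memory_mb"]), int(match["vcores"])


sample = (
    "Caused by: java.lang.IllegalArgumentException: requirement failed: "
    "Container resource too large: ContainerGroup All-In-One, [110592MB, 18]"
)
print(find_oversized_container_group(sample))
```

The extracted memory and vcores values can then be compared with the YARN scheduler limits to see which one was exceeded.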